What Actually Drives Brand Visibility in AI Search

Good Morning,

AI continues to show up in parts of consumer life that didn’t have it until recently: bank account integrations, memory chip supply chains, even the typing layer of your phone.

Last week, we sized the $34B GEO market and noted that most vendors are selling monitoring rather than doing the harder work of changing the answer. This week’s big picture looks at five new studies on what actually drives brand visibility in AI search.

The findings help explain why monitoring-first tools don’t move the needle: brand strength does most of the work, and the engines largely agree on which brands matter.

Let’s get into it.

Vas-

This Week’s Signals

AI & Consumer

1. Perplexity launched a finance hub for consumers. A new Plaid integration lets users connect bank accounts, credit cards, and loans directly to its Computer agent. Search engines are moving past “tell me” into “do it for me,” inserting themselves between consumers and their money. (Read more)

2. Apple’s Mac mini and Mac Studio went out of stock. A DRAM shortage tied to AI infrastructure demand is delaying the M5 refresh. Memory chips needed for consumer products are being absorbed by GPU clusters that train and serve AI models. AI’s resource demand is now visible at the consumer electronics counter. (Read more)

3. Google made AI dictation a system feature. Voice input has been “almost good enough” for a decade. With LLMs cleaning up output in real time, “almost” becomes the default. (Read more)

AI at Large

4. Europe is rolling back AI rules to compete with the US. The EU is loosening regulations it spent years designing. The cost of being the world’s regulator turned out to be higher than the political cost of rewriting the rules. (Read more)

5. Publishers are facing record AI bot and scraper activity. Third-party scrapers are increasingly hitting publisher sites to feed LLM pipelines, with little traffic returning. It’s the same dynamic as in the lead essay, viewed from the supply side: AI is extracting value from the open web faster than it returns visits. (Read more)

6. Lovable introduced built-in payments powered by Stripe and Paddle. Users can monetize apps through subscriptions and one-time payments, set up directly via chat prompts. (Read more)

BIG PICTURE

This week we feature research in two important areas: what drives brand visibility in AI search, and how enterprise AI priorities shift as adoption matures.

What the Research Says Drives AI Search Visibility

When an AI summary appears on Google, users click a traditional result 8% of the time, compared with 15% when no summary appears (Pew, July 2025). About 60% of searches now end without a click (Bain, February 2025). Zero-click rates rise to 83% when AI Overviews are present (Similarweb). Being mentioned in the AI answer is becoming the new unit of brand presence.

Five studies have now tried to measure what drives brand visibility in AI answers:

Ahrefs analyzed 75,000 brands

Profound analyzed 680 million AI citations

Similarweb broke down six consumer sectors (March 2026)

Seer ran 10,000 questions through GPT-4o

Digital Bloom synthesized the field

The core finding: engines converge on brands more than they converge on sources.

Ahrefs measured a 0.75–0.82 correlation across engines on which brands they mention. Digital Bloom reports only ~11% domain overlap between what ChatGPT and Perplexity cite. Different measurements, same direction.

The brands that win are predictable:

Highest Google rankings (Seer: 0.65 correlation with GPT-4o mentions in finance and SaaS)

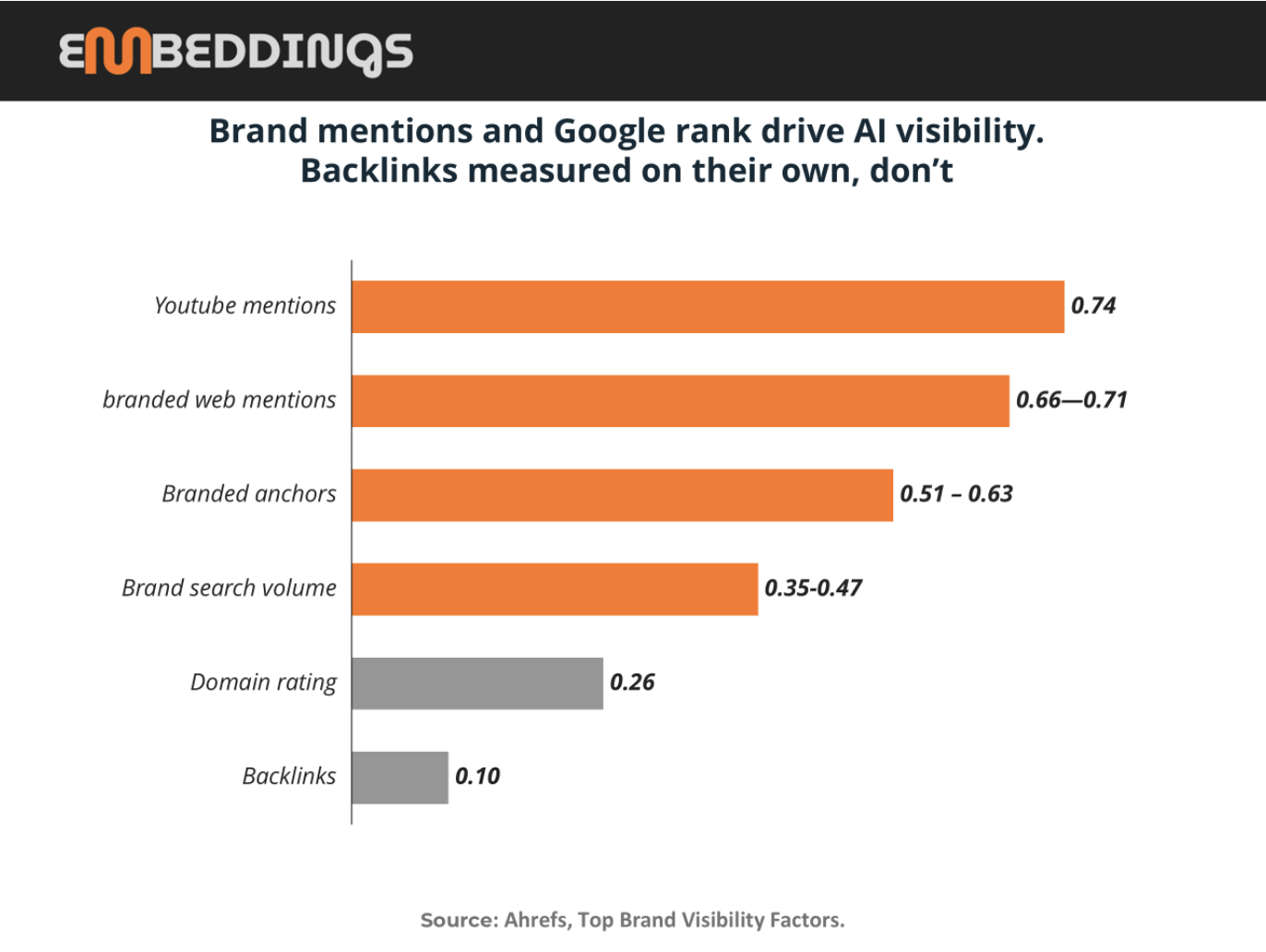

Most branded web mentions (Ahrefs: 0.66 - 0.71)

Strongest YouTube presence (Ahrefs: 0.737 - the strongest single factor)

Backlinks don’t matter directly. Ahrefs and Seer both found this independently.

Most GEO vendors are selling engine-by-engine tactics. The research says the engines are more alike than different.

AI Priorities Shift by Stage (Predictably)

When organizations first deploy AI, success metrics are defensive: don’t break anything, reduce costs, manage risk. That’s the right starting point, but those metrics stop being the ones that matter surprisingly early.

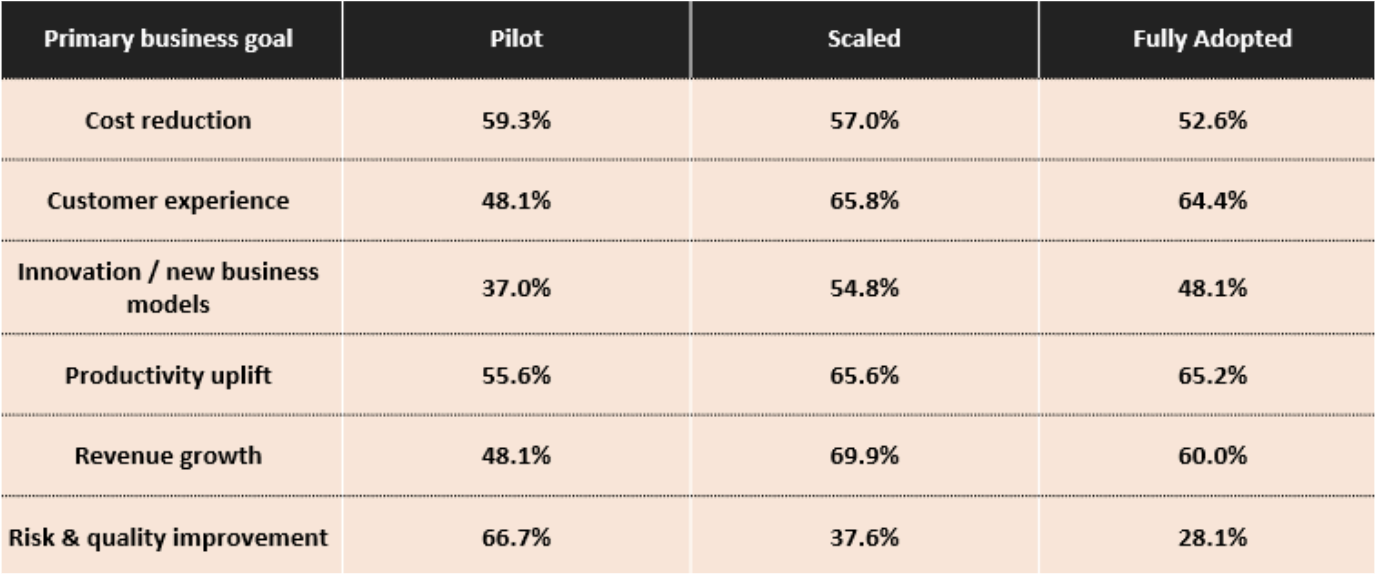

A recent maturity survey tracked how AI goals shift across three stages: pilot, scaled, and fully adopted.

At the pilot stage, 66.7% of organizations prioritize risk and quality

By full adoption, only 28.1% do, the largest shift in the dataset

Revenue growth moves in the opposite direction, peaking at the scaled stage:

48% at pilot

70% at scaled

60% at full adoption

Customer experience and productivity both exceed 64% in mature stages
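The shifts are easiest to compare as percentage-point changes between stages. Here is a minimal Python sketch using only the figures quoted above; the data layout and the helper name `point_change` are our own, risk and quality has no reported value for the scaled stage (left as `None`), and customer experience and productivity are omitted because only a lower bound (above 64%) is given.

```python
# Share of organizations (%) prioritizing each goal, by adoption stage,
# as quoted from the maturity survey. No scaled-stage figure was reported
# for risk and quality, so it is stored as None and never used below.
priorities = {
    "risk_and_quality": {"pilot": 66.7, "scaled": None, "full": 28.1},
    "revenue_growth": {"pilot": 48.0, "scaled": 70.0, "full": 60.0},
}


def point_change(series: dict, start: str, end: str) -> float:
    """Percentage-point change between two stages (end minus start)."""
    return round(series[end] - series[start], 1)


for goal, series in priorities.items():
    print(goal, "pilot->full:", point_change(series, "pilot", "full"))

revenue = priorities["revenue_growth"]
print("revenue pilot->scaled:", point_change(revenue, "pilot", "scaled"))
print("revenue scaled->full:", point_change(revenue, "scaled", "full"))
```

Read this way, the risk-and-quality drop (about 39 points) is the headline shift, and revenue growth is not a straight climb: it gains 22 points by the scaled stage, then gives back 10.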

Practical takeaway: AI programs have an invisible graduation deadline. If you keep evaluating mature programs with pilot-stage metrics, they look like they’re plateauing when they’re actually progressing.

What the Research Says Drives AI Search Visibility

Why this matters

The click economy is weakening, and the data is consistent across sources. About 60% of searches now end without a click (Bain, February 2025). When an AI summary appears on Google, users click a traditional search result 8% of the time, roughly half the 15% rate when no AI summary is shown (Pew Research, July 2025, based on 68,879 tracked queries from roughly 900 US adults). The effect is sharper still where AI Overviews (AIO) appear: zero-click rates rise from about 60% on non-AIO searches to around 83% on AIO searches (Similarweb, May 2025).

The traffic that does come through AI-referred channels looks different. Adobe analyzed more than one trillion visits to US retail sites and found AI-referred visitors had a 23% lower bounce rate and viewed 12% more pages per visit than other traffic (Adobe, March 2025).

Two things follow. Visibility in AI answers matters more than it did a year ago, because fewer searches now end in a click and the answer itself is often where the user meets the brand. And when clicks do happen from AI sources, they tend to be higher intent. Being mentioned in the answer, whether the user clicks or not, is becoming the new unit of brand presence.

Five studies have now attempted to measure what actually determines whether a brand appears in AI-generated answers. Ahrefs analyzed 75,000 brands across ChatGPT, Google AI Mode, and Google AI Overviews. Seer ran 10,000 finance and SaaS queries through GPT-4o. Profound examined 680 million AI citations between August 2024 and June 2025. Similarweb’s March 2026 report broke brand visibility down across six consumer sectors, and Digital Bloom synthesized the broader field.

Taken together, this is the most comprehensive evidence base we have.

What the studies agree on

Across all five studies, one conclusion stands out clearly: brand strength is the primary driver of AI visibility.

The strongest observed relationships:

1. YouTube mention volume shows a 0.737 correlation with AI visibility in the Ahrefs dataset.

2. Branded web mentions fall in the 0.66–0.71 range.

3. Google page-one rankings correlate at 0.65 with GPT-4o mentions in finance and SaaS, according to Seer.

These are large effects by any standard. But they are better understood as reflections of existing brand strength than as levers you can directly pull. The brands that show up in AI answers are, overwhelmingly, the brands that were already dominant elsewhere.
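One way to put "large" in concrete terms: if these are Pearson correlations (the studies' exact coefficient type is an assumption on our part), squaring each one gives the share of variance that AI visibility and the factor have in common. A quick Python check, where the helper `shared_variance` is our own illustrative name:

```python
# Correlations quoted above. Treating them as Pearson r is an assumption;
# r squared is the share of variance the two variables have in common.
correlations = {
    "YouTube mentions (Ahrefs)": 0.737,
    "Branded web mentions, low end (Ahrefs)": 0.66,
    "Branded web mentions, high end (Ahrefs)": 0.71,
    "Google page-one rank (Seer, GPT-4o)": 0.65,
}


def shared_variance(r: float) -> float:
    """Return r squared as a percentage, rounded to one decimal place."""
    return round(100 * r * r, 1)


for factor, r in correlations.items():
    print(f"{factor}: r = {r}, r^2 = {shared_variance(r)}%")
```

That works out to roughly 42% to 54% of shared variance: a strong association for observational data, though, as the paragraph above notes, it mostly reflects brand strength that already exists rather than a lever.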

Similarweb’s March report illustrates how this dynamic concentrates at the extremes. In its electronics prompt set, Apple appears in 54% of AI responses, more than any other brand by a wide margin. Similarweb describes this as a “structural dominance” effect: when a brand leads across mentions, video presence, and review coverage, AI visibility compounds that lead rather than redistributing it.

The finance category shows the other side of the same effect. NerdWallet appears far more often in AI answers than its Google rankings for the same queries would suggest, outperforming its SERP position by dozens of spots. Its underlying brand strength across mentions, YouTube presence, and likely training-data representation remained intact even after Google’s algorithm updates reshaped rankings, and AI systems continue to surface what that brand strength predicts. In that sense, brand strength is a more stable driver of visibility than Google rank itself.

A second consistent finding is that AI engines agree more on which brands matter than on which sources to cite. Ahrefs finds a 0.75–0.82 correlation across ChatGPT, Google AI Mode, and AI Overviews in the brands they mention. At the same time, Digital Bloom reports that only about 11% of cited domains overlap between ChatGPT and Perplexity. These are different lenses on the same phenomenon. At the brand level, outputs converge. At the source level, they diverge. Engines construct answers from different materials, but they tend to resolve to the same set of brands. If your brand is strong, you appear across systems; influencing which specific page or domain gets cited is a narrower, secondary problem.

The third area of agreement is more counterintuitive: backlinks do not appear to drive AI visibility directly. Both Ahrefs and Seer arrive at this conclusion independently. The nuance is that backlinks still matter upstream. They contribute to Google rankings, and Google rankings correlate meaningfully with AI mentions. But when backlinks are isolated as a standalone variable, their signal disappears. Their effect is already embedded in broader measures of authority, visibility, and brand presence.

What the studies can’t tell you

Despite the consistency of the findings, the evidence base has clear limitations. The datasets are concentrated in finance, SaaS, and general consumer categories. Geographic coverage is also unclear: Similarweb focuses on the US, while the other studies do not specify their regional scope.

There are also structural biases in the samples. Ahrefs, for example, pre-filters for domains with a rating above 40, which skews the dataset toward already established sites. Seer’s analysis is limited to a single model, GPT-4o, at a single point in time. More broadly, all of the studies are snapshots. AI systems continuously reweight sources and signals, often on a quarterly cadence, which introduces uncertainty about how stable these relationships are over time.

Most importantly, every finding is correlational. The data shows what moves together, not what causes change. The overall picture is directionally reliable, but it does not support precision tactics. Treating any single correlation as a direct lever would be a mistake.

What to do with it

The practical implications are relatively straightforward. First, the case for engine-specific monitoring tools weakens significantly. If brand mention patterns correlate at roughly 0.77 across systems, maintaining separate dashboards for each engine adds limited incremental value.

Second, the primary metric to track shifts from referral traffic to mention share. Similarweb reports that visits to AI platforms grew 28.6% year-over-year, while outbound referral traffic remained flat. As interaction consolidates within AI interfaces, visibility is less about clicks and more about presence within the answer itself.
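Mention share is simple to compute once you have a sample of AI answers for a fixed prompt set: the fraction of responses that name a brand at least once, which is one plausible reading of a figure like Apple's 54% above. A minimal Python sketch; the function name, the fictional brands and responses, and the plain case-insensitive substring matching are all hypothetical simplifications (real tracking would need alias handling and better entity matching).

```python
def mention_share(responses: list[str], brand: str) -> float:
    """Fraction of responses that mention `brand` at least once (case-insensitive)."""
    if not responses:
        return 0.0
    needle = brand.lower()
    hits = sum(1 for text in responses if needle in text.lower())
    return hits / len(responses)


# Hypothetical AI answers to one prompt set, using fictional brands.
responses = [
    "Acme and Globex make the best laptops for students.",
    "Consider the Acme Air or an Initech Pro.",
    "Initech leads in noise-cancelling headphones.",
    "Acme and Globex TVs are both strong value picks.",
]

for brand in ["Acme", "Globex", "Initech"]:
    print(f"{brand}: {mention_share(responses, brand):.0%}")
```

Tracked per prompt set over time, a number like this, rather than referral clicks, is what the shift described above points toward.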

Finally, the strategy implication is not technical but foundational: invest in being a brand that is worth mentioning. That means building topical depth, earning coverage on platforms that AI systems already ingest, and producing content that functions as a reference rather than just a ranking asset. The systems are not particularly subtle in what they reward. They surface what already stands out.

Sources

Ahrefs, Top Brand Visibility Factors (75,000 brands)

Seer Interactive, What Drives Brand Mentions in AI Answers

Similarweb, 2026 Generative AI Brand Visibility Report

Profound, AI Platform Citation Patterns (680M citations, Aug 2024–June 2025)

Digital Bloom, 2025 AI Citation & LLM Visibility Report