From Attention to Neural Prediction

Prediction is accelerating. Meta open-sourced a model that predicts brain response to video, audio, and text. And it's not just measurement that's moving: the surfaces where predictions get monetized are expanding too. ChatGPT's ad business crossed a $100M run rate in under two months. But the pushback is arriving just as fast. A jury found Meta negligent in the first social media addiction trial. Wikipedia banned AI-written articles. Reddit started labeling bots. The consumer journey is changing faster than the guardrails can follow. This issue: what Meta's TRIBE v2, a brain-response model built on the same kind of embeddings that power ad targeting (marketing embeddings, literally), means for anyone who measures advertising.

Vas

Marketing × AI

ChatGPT's Ad Business Hit $100M Run Rate in Under 60 Days

OpenAI's advertising pilot crossed $100 million in annualized revenue within two months of launch. The constraint isn't advertiser interest; it's available ad slots. (Read more)

Meta Found Negligent in Landmark Addiction Trial

A Los Angeles jury found Meta and YouTube negligent in the first social media addiction case to go to trial, with Meta held 70% responsible. The case is a test for roughly 2,000 pending lawsuits. Separately, a New Mexico jury ordered Meta to pay $375M for failing to protect children from online predators.

Reddit Labels Its Bots, Keeps Its AI Posts

Reddit is testing bot labels, passkey verification, and optional World ID scans to separate real users from automated accounts. AI-generated posts will still be allowed, but the platform is building infrastructure to flag synthetic activity. (Read more)

AI at Large

Wikipedia Bans AI-Written Articles

English Wikipedia has formally banned AI-generated articles. Models can assist with translation and refinement, but AI-authored content doesn't meet the encyclopedia's standards for sourcing, neutrality, and verifiability. (Read more)

The U.S. Government Wants to Teach You AI, Over Text

The Department of Labor launched "Make America AI-Ready," a free 7-day AI literacy course delivered via text message. The target: workers in industries facing automation. (Read more)

FROM THE DESK

This issue is about measuring human response – what your brain does when it sees an ad, and whether AI can predict it. Here's a related question: what is AI doing to your own thinking? I built The Pulse, a 45-second weekly check-in that tracks how AI shapes your cognition, curiosity, and sense of meaning at work. Seven questions, research-backed. Take it this week and I'll report the first aggregate findings in the next issue.

THE DEEP DIVE

Your Brain on Ads: What Meta's TRIBE v2 Means for Marketing

A model that predicts how 700 brains respond to video, sound, and language, built on embeddings.

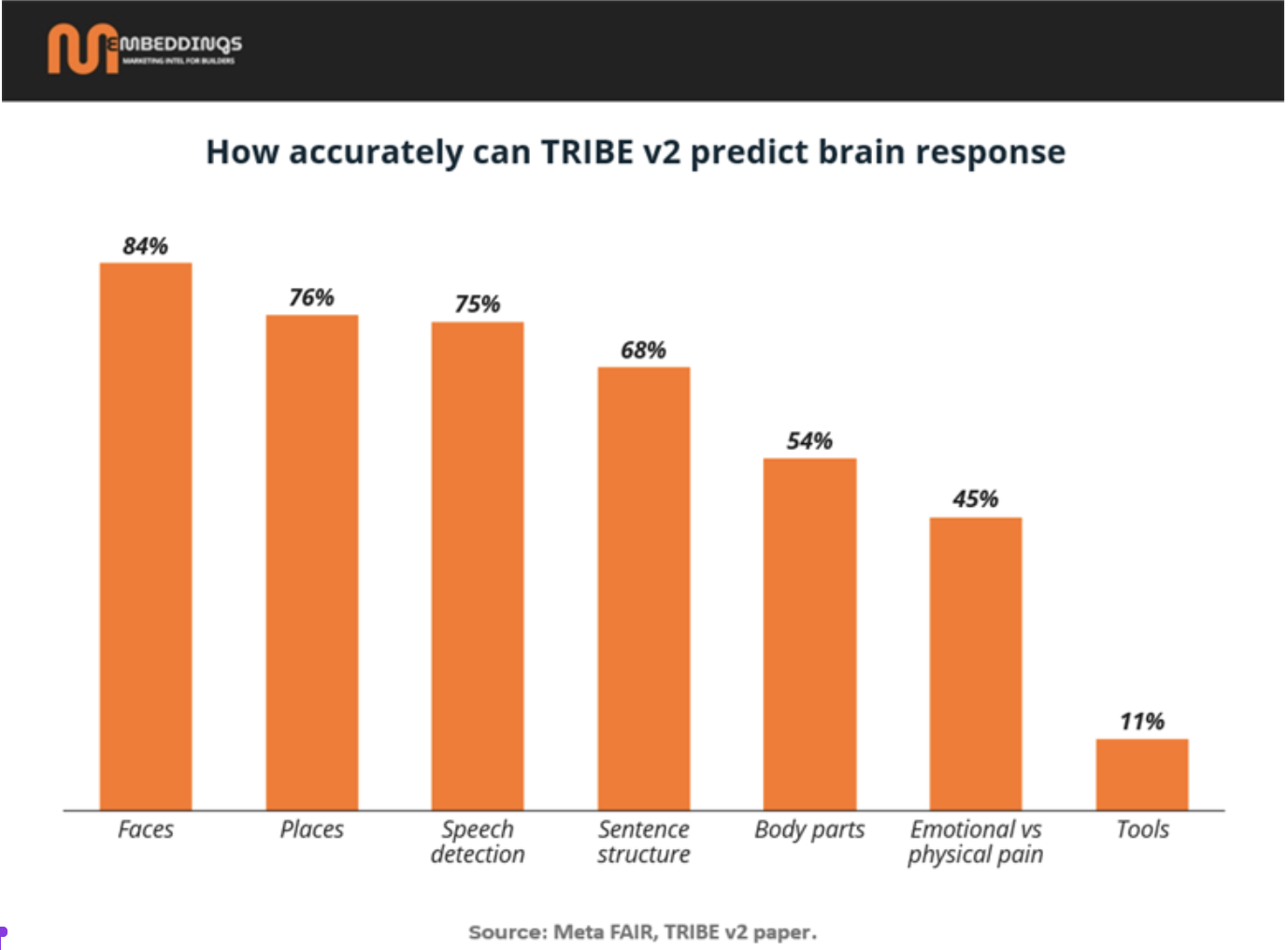

What happened. On March 26, Meta's FAIR lab released TRIBE v2, a model trained on fMRI scans from more than 700 people watching movies, listening to podcasts, and reading text. It predicts brain response across 70,000 voxels, a 70x increase over TRIBE v1, which trained on just four subjects and still won the Algonauts 2025 competition, beating 263 teams.

V1 to v2. TRIBE v1 was a proof of concept: four people, ~1,000 voxels, one modality. TRIBE v2 is 700+ subjects, tri-modal (video, audio, language), and capable of zero-shot prediction: it can predict brain responses for people it has never scanned, in languages it was never trained on. Meta says its zero-shot predictions are sometimes more accurate at estimating group-averaged brain responses than the recordings of individual subjects.

Neuromarketing before TRIBE. The field goes back two decades. The study that put neuromarketing on the map, Montague's Pepsi vs. Coke fMRI experiment at Baylor College of Medicine, was published in Neuron in 2004. Companies like Nielsen Consumer Neuroscience, Neurons Inc, and Realeyes have built commercial products around EEG, eye-tracking, and facial coding. Some of this work has been rigorous: Nielsen's 2012 study testing 60 FMCG ads with combined EEG, skin conductance, and facial expression data produced real predictive signal. Neurons Inc has peer-reviewed work on visual attention and ad recall, and their AI now predicts attention heatmaps with 95%+ accuracy against real eye-tracking panels.

The limitations are real but specific. Sample sizes have been small. The gap between measuring attention and predicting purchase behavior has never been fully closed. And some vendor studies lacked independent replication, which made it harder for the rigorous work to get credit. The science is sound. The challenge has been scale and connecting the neural signal to business outcomes.

The question is not whether AI can predict neural response to creative. It can. The question is whether neural response predicts anything a CMO would pay for.

What TRIBE v2 changes. Two things.

First, scale. Instead of wiring up 30 people in a lab, you simulate how 700 brains respond to a piece of creative, computationally, in minutes. A creative team could upload 50 ad variants and get a neural response score on each one before any media spend. The model exists. The applied layer connecting it to a creative workflow does not, yet.

Second, direction. If fMRI-level simulation can eventually be distilled into reliable marketing metrics (attention, emotional response, recall), then the pre-test and the scoring layer collapse into one step. Today, creative testing and media buying are separate workflows. A model that scores creative at the point of upload and routes it to the right audience could merge them.

But the distance is real. There is an important gap between simulating brain activity and predicting the specific responses marketers actually pay for. fMRI is itself a noisy signal. Going from a 70,000-voxel brain simulation to a reliable metric like ad recall or purchase intent adds layers of translation that do not exist yet. As one neuroscientist put it: the simulation approach is better suited, for now, to research and medical applications. For marketing, the more practical path is to establish the right metrics first (attention, emotion, brand recall) and then train models that predict those directly. That is, in fact, what companies like Neurons already do.

Could this eventually reach the ad stack? Creative scoring already runs in real-time programmatic systems: attention prediction, engagement models, and dynamic creative optimization all operate at auction speed today. TRIBE v2 is not there. But it aligns AI embeddings, the same math that powers those real-time systems, with recorded brain activity. If someone builds a compressed scoring layer on top of that alignment, it could eventually feed into the same pipes. If Meta builds that bridge, one company would own the social graph, the ad auction, and the neural response model. That is speculative, not imminent, but it is worth watching because the building blocks are now open-source.
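For readers who want to see what "aligning embeddings with brain activity" can look like in its simplest form, here is a toy sketch. It is not Meta's method and uses no TRIBE v2 code. It assumes the classic linear "encoding model" approach: fit a ridge regression from stimulus embeddings to each voxel's response, then predict the response to a stimulus the model never saw. Everything in it is hypothetical and synthetic: the 4-dimensional embeddings, the three "voxels", the weights in `TRUE_W`, and the helper names `solve`, `fit_ridge`, and `predict`.

```python
import random


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x


def fit_ridge(X, y, lam):
    """Closed-form ridge fit for one voxel: w = (X^T X + lam*I)^-1 X^T y."""
    d = len(X[0])
    A = [[sum(row[i] * row[j] for row in X) + (lam if i == j else 0.0)
          for j in range(d)] for i in range(d)]
    b = [sum(row[i] * t for row, t in zip(X, y)) for i in range(d)]
    return solve(A, b)


def predict(w, x):
    """Predicted voxel response = dot product of weights and embedding."""
    return sum(wi * xi for wi, xi in zip(w, x))


# Synthetic stand-ins (assumptions): 200 stimuli, 4-dim embeddings, 3 voxels.
rng = random.Random(0)
DIM, N_STIMULI = 4, 200
TRUE_W = [[0.8, -0.2, 0.0, 0.5],
          [0.1, 0.9, -0.4, 0.0],
          [-0.3, 0.0, 0.7, 0.2]]

X = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(N_STIMULI)]
# Simulated "recorded" responses: a linear signal plus a little noise.
Y = [[predict(w, x) + rng.gauss(0, 0.01) for x in X] for w in TRUE_W]

# Fit one encoding model per voxel.
W_hat = [fit_ridge(X, y, lam=1e-3) for y in Y]

# Predict the response to a new stimulus (a hypothetical ad embedding).
new_ad = [rng.gauss(0, 1) for _ in range(DIM)]
predicted = [predict(w, new_ad) for w in W_hat]
print([round(p, 3) for p in predicted])
```

The toy works because the fake data really is linear and nearly noise-free. Real fMRI is neither, which is the gap the rest of this piece is about.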

The hard questions. Predicting where someone looks is the easy part. Emotional arousal is getting closer. But the causal link between "this ad activated this pattern in the temporal cortex" and "this ad drove incremental sales" has not been closed. TRIBE v2 can tell you what the brain does. It cannot tell you what that means for a P&L. The companies that close that gap will define how creative gets scored next.

The license. CC BY-NC, non-commercial use only. Researchers can use it freely. Anyone building a commercial product needs a separate deal with Meta. The model is open. The business rights are not.

COMPANY PROFILE

Neurons: Predicting What Your Eyes Do Before You Do

Neuromarketing has a scaling problem. Lab-based studies are rigorous but slow and expensive. Neurons (founded 2013, Copenhagen, €6M raised, $13.4M revenue) built an AI product that skips the lab entirely.

Founded by neuroscientist Dr. Thomas Zoëga Ramsøy, Neurons started as a consultancy running EEG and eye-tracking studies for IKEA, Estée Lauder, and Visa. In 2020 they launched Neurons AI. Upload an image or video, get an attention heatmap in seconds.

How it works: The AI, which spans ~200 machine learning models, is trained on eye-tracking data from 20,000+ participants. Upload a creative asset and the system returns a heatmap that Neurons claims is equivalent to showing the image to 100-150 real people, with 95%+ accuracy. It predicts where eyes go, how long they stay, and how much brand attention the first two seconds capture.

Traction: Clients include Chanel, Prada, Mars, Dentsu, Google, Tesco, and Lowe's. One media agency reported a 43% average increase in client CTRs using Neurons' scores to filter creative before launch. Neurons also offers an API for embedding its scoring into third-party platforms, with Brandwatch as one public integration. Three consecutive Børsen Gazelle growth awards. Former Danish PM Helle Thorning-Schmidt on the board.

The caveat: Neurons predicts attention, not sales. The 95% accuracy is measured against eye-tracking panels, so it tells you where someone will look. Whether looking translates to buying is a different question, one the entire field has not fully answered.

The bigger question is where neuro data fits in a consumer journey that is changing fast. Attention prediction on a static ad is one application. But what about scoring a product page in real time as a shopper browses? Optimizing a CTV ad mid-flight based on predicted emotional response? Filtering AI-generated creative variants before they enter a programmatic auction? The data exists. The models are getting faster. The question is which layer of the marketing stack absorbs them first.