When AI Becomes the Interface, Who Controls Outcomes?

Good morning,

This week is about autonomy, monetization, and who ultimately controls the AI interface.

In News, AI agents continue their march from experiment to infrastructure. Anthropic opens Claude Cowork to all Pro users, Google suggests we’ve been prompting models the wrong way entirely, and new research from both Anthropic and OpenAI sheds light on how people actually use AI, and where it breaks down. Replit collapses app creation into a single mobile workflow, while Airbnb hires Meta’s former generative AI chief, hinting that consumer platforms may be ready to lean in harder.

In Big Picture, we examine a defining fork in the road: OpenAI moves toward ads, Google moves toward personal intelligence. ChatGPT begins testing sponsored content to help fund its compute bill, while Gemini embeds itself deeper into users’ lives (photos, email, search) and claims to keep ads at arm’s length. Different models, same question: where does monetization end and influence begin?

And in Company in Focus, we take a hard look at Alembic’s bold claim that causal AI can replace marketing experiments. It’s a serious bet, backed by serious capital, but one that still demands proof.

The interface is shifting. The business models are diverging. And the assumptions marketers relied on are being challenged.

- The Marketing Embeddings Team

NEWS

Anthropic opens up its Claude Cowork feature to anyone with a $20 subscription

Claude Cowork is now available to anyone subscribed to Anthropic’s Pro plan. As with other AI agents, its novelty is the ability to work on its own. (Read More)

We’ve been going about prompting all wrong, according to Google’s latest paper. (Read more)

Anthropic released new economic primitives mapping how Claude is used across tasks, regions, and skill levels. The data shows uneven adoption, high success on simple tasks, and clear signals for job exposure. (Read more)

How people use ChatGPT: an analysis of OpenAI’s first user study. The paper, How People Use ChatGPT, is the company’s attempt to document patterns of adoption. (Read More)

Replit launched mobile app creation, letting users build, test via QR code, and publish directly to the App Store from a single platform. (Read more)

Airbnb appointed Ahmad Al-Dahle as Chief Technology Officer, bringing in Meta's former head of generative AI and the leader behind the Llama model family. Will Airbnb finally lean into AI? (Read more)

BIG PICTURE

Two Roads Diverge: OpenAI Goes Ads, Google Goes Personal

Last week brought two announcements that reveal fundamentally different visions for AI's business model—and its role in our lives.

What We Know

OpenAI is putting ads in ChatGPT. Starting in the coming weeks, logged-in US adults on the free and "Go" tiers will see sponsored content at the bottom of answers when there's a relevant product or service. Plus, Pro, and business customers won't see ads. OpenAI says ads won't influence answers, conversations stay private from advertisers, and users under 18 won't see them. Topics like politics, health, and mental health are off-limits for ad placement.

The move marks a philosophical shift. Sam Altman previously opposed advertising; now he cites Instagram as proof that ads "can be useful if handled carefully." With $1.4 trillion in infrastructure commitments and a $20B revenue run rate, the math is clear: subscription revenue alone won't cover the compute bill.

Google, meanwhile, introduced Personal Intelligence for Gemini and made a point of saying it has no plans to put ads in the Gemini app. Personal Intelligence lets users connect Gmail, Photos, YouTube, and Search in a single tap. Gemini can now reason across your data: find your license plate from a photo, recall a hotel you stayed at five years ago, suggest tires after noticing your road trip pictures.

Note what Google said: no ads *in Gemini*. What they didn't say: that Gemini data won't inform ads elsewhere. When Personal Intelligence knows you're planning a road trip from your photos and searching for hotels in your Gmail, does that signal stay siloed—or does it flow into the ad targeting system that powers Google's $300B business? The privacy claim is narrower than it sounds.

What We Don't Know

On ChatGPT ads:

How will "relevance" be determined without using conversation data?

Will sponsored results get preferential treatment despite official denials?

What happens when an ad conflicts with the best answer?

At what point does a "helpful suggestion" become an ad?

On Personal Intelligence:

If not ads in Gemini, what's the monetization path?

Will these signals remain separate from Google's ad infrastructure—and if so, for how long?

What happens when Gemini knows more about you than you remember about yourself?

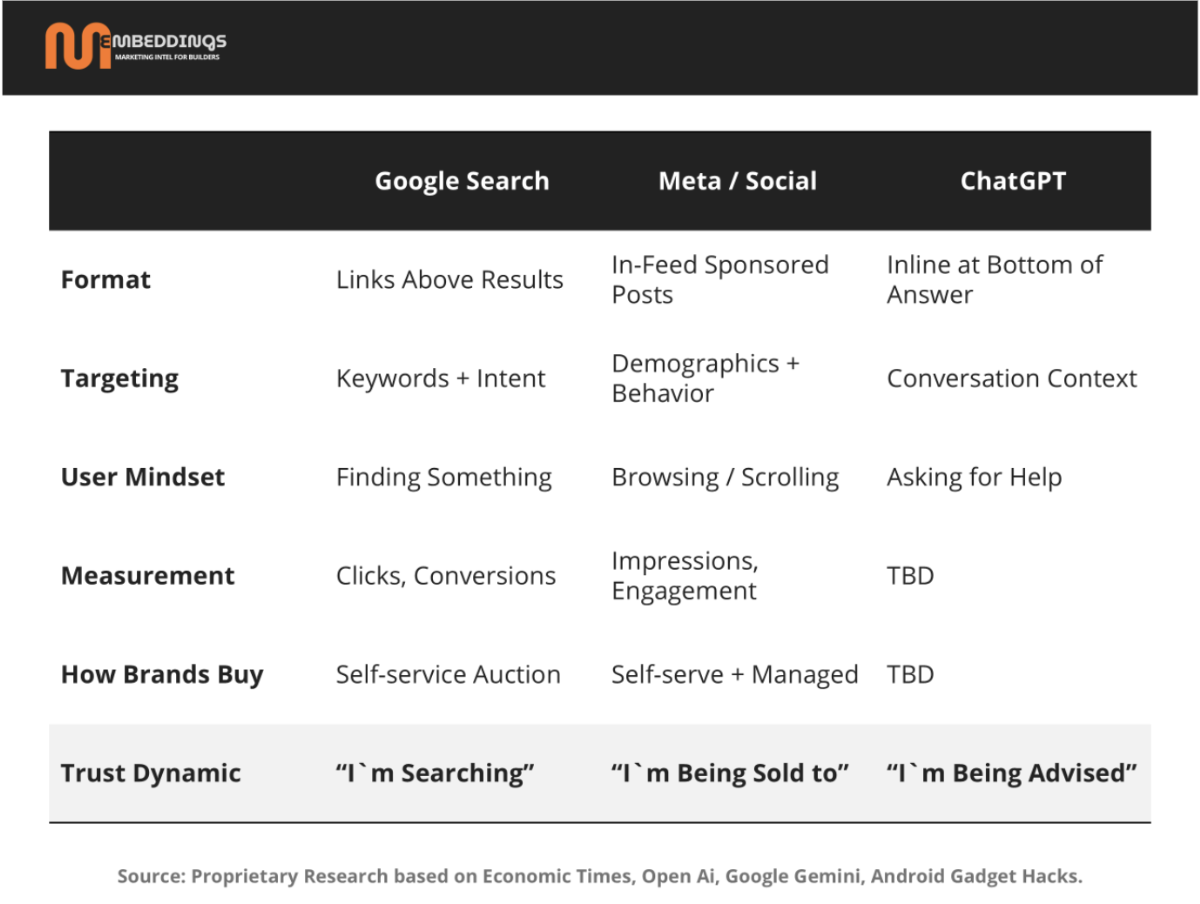

On the emerging ad ecosystem:

What formats will AI-native ads take? Inline recommendations? Sponsored reasoning? Something we haven't seen yet?

How will brands buy? Self-serve auction like Google Ads—or premium direct sales like early podcast sponsorships?

Who captures the value? The AI platforms, the agencies who figure it out first, or the brands willing to experiment?

And what does "performance" mean when there's no click—just an AI telling someone what to buy?

Why These Questions Matter

We're not ready to make predictions. But the questions themselves reveal what's at stake.

If ChatGPT ads work, every AI assistant will follow—and the line between "answering your question" and "serving you a recommendation" will blur permanently. If Google's Personal Intelligence data feeds the ad machine, then "no ads in Gemini" is a distinction without a difference. And if brands can't measure what's working, the first movers will be flying blind—but they'll also be the ones who figure it out.

The next 12 months will show us whether AI becomes the most intimate advertising channel ever built—or something else entirely. The answers aren't clear yet. But the questions we're asking now will determine what we're willing to accept later.


COMPANY IN FOCUS

Alembic: Can Causal AI Replace Marketing Experiments?

Alembic is making one of the boldest bets in marketing measurement right now. The company just raised $145 million at a $645 million valuation, with Accenture and Jeffrey Katzenberg’s WndrCo backing the round. They’ve reportedly built a supercomputer-scale NVIDIA DGX cluster delivering ~256 petaflops of compute. Their customer list includes NVIDIA (a founding customer), Delta Air Lines, and Mars. This is not a science project; it is a serious commercial push.

The thesis is provocative: experiments may no longer be necessary.

Instead of running geo-tests, holding out audiences, or waiting weeks for incrementality readouts, Alembic claims it can infer causal impact directly from observational data. Feed in CRM, ad platforms, TV, podcasts, PR, and social, and their system estimates what actually caused revenue.

Technically, Alembic says it combines Spiking Neural Networks with causal inference methods to answer the counterfactual question: what would have happened if we hadn’t done this? That framing matters. Attribution asks which touchpoint deserves credit. Causality asks whether the marketing changed the outcome at all.

If this works as advertised, the implications are enormous. Privacy has weakened identity-based attribution. Experiments are slow, expensive, and operationally painful. Causal inference is a legitimate scientific field. And the scale of the compute plus the caliber of customers suggests this is not vaporware.

But here is the tension.

There is no publicly available validation. No peer-reviewed work. No third-party audits. No clear head-to-head comparisons against randomized holdouts. In measurement, that absence matters. Observational causal inference can work, but only under strong assumptions: correct causal structure, no major unmeasured confounders, and models that reflect reality. When those fail, correlation quietly puts on a “causal” costume.
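To make the confounding risk concrete, here is a minimal simulation sketch. It is not Alembic's method, and every number in it is a made-up assumption: a hypothetical true ad lift of 2.0 per customer, and an unmeasured "demand" factor that drives both who gets targeted and how much they spend. It compares a naive observational read (exposed vs. unexposed) with a randomized holdout on the same synthetic population.

```python
import random
from statistics import mean

TRUE_LIFT = 2.0  # hypothetical true causal effect of the ad on revenue per customer


def estimate_lift(n, randomized, seed=0):
    """Return mean(revenue | saw ad) - mean(revenue | no ad) on synthetic data."""
    rng = random.Random(seed)
    exposed, unexposed = [], []
    for _ in range(n):
        demand = rng.gauss(0, 1)  # unmeasured confounder (assumed, not observed)
        if randomized:
            # Holdout test: a coin flip decides exposure, independent of demand.
            saw_ad = rng.random() < 0.5
        else:
            # Observational data: targeting favors high-demand customers.
            saw_ad = demand + rng.gauss(0, 1) > 0
        # Revenue depends on demand, plus the true lift if the ad was seen.
        revenue = 10 + 5 * demand + (TRUE_LIFT if saw_ad else 0) + rng.gauss(0, 1)
        (exposed if saw_ad else unexposed).append(revenue)
    return mean(exposed) - mean(unexposed)


naive_estimate = estimate_lift(50_000, randomized=False)
holdout_estimate = estimate_lift(50_000, randomized=True)

print(f"true lift:        {TRUE_LIFT:.2f}")
print(f"naive estimate:   {naive_estimate:.2f}")
print(f"holdout estimate: {holdout_estimate:.2f}")
```

In this toy setup the naive comparison lands well above the true lift because high-demand customers are both more likely to be targeted and more likely to spend, while the randomized holdout lands close to it. A real observational system would adjust for the confounders it can measure; the open question is what happens with the ones it cannot.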

So the real question is not whether causal AI is clever. It is whether observational models can replace experiments as the source of truth.

If yes, attribution, MMM, and incrementality testing are about to be rewritten.

If no, this becomes an extraordinarily expensive way to generate high-powered correlations with a causal label.

Right now, the honest answer is simple: we do not know.

For claims this large, “trust us” is not enough. What would move the market? Published validation versus randomized experiments. Transparent assumptions and failure modes. Third-party audits. Case studies showing uncertainty, not just headlines.

Fascinating bet. But we are not ready to retire the holdout test just yet.

SaaS stocks aren’t dropping on macro alone. AI coding agents like Claude Code are compressing the SaaS model, making software cheaper to build, easier to replicate, and harder to defend. Markets are repricing SaaS for an AI-first world.